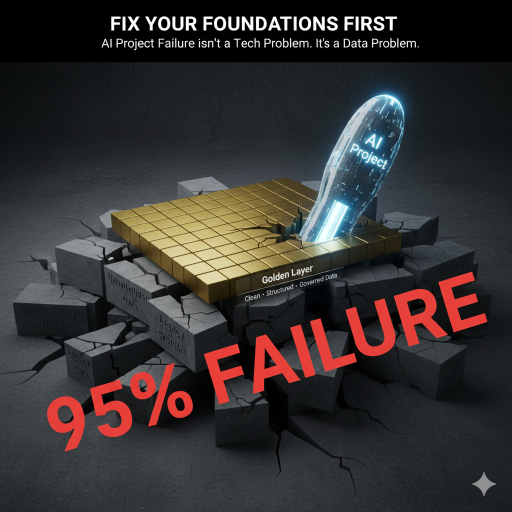

Too often, organisations put the cart before the horse on their Agentic AI projects, resulting in a staggering 95% failure rate.

This webinar addresses the uncomfortable truth that 95% of AI projects fail—not because of the technology itself, but due to poor foundations.

Industry experts from Potenza and Model Citizn explain why data infrastructure and governance must come before AI implementation, revealing that most organisations are building on unstable ground.

Learn the critical importance of creating a “golden layer” of clean, structured data, why AI should be treated like an inexperienced employee requiring constant oversight, and practical steps to start small with narrow use cases rather than betting the farm on unproven technology.

Key themes on why putting the cart before the horse on Agentic AI is a bad idea:

- Foundation before flash: All three speakers emphasised getting data infrastructure right before implementing agentic AI

- The 95% failure rate is real and primarily organisational/foundational, not technological

- Start small, specific, and supervised rather than attempting enterprise-wide autonomous deployment

- Human oversight remains critical – AI augments but cannot replace human judgment and expertise

- Cultural/people challenges are as significant as technical ones – organisations must address employee concerns and reskilling needs

The webinar was refreshingly candid about AI’s current limitations while providing a practical roadmap for responsible implementation.

Speaker Outline:

Trevor Churchly (Host, Potenza & Model Citizn)

Set the stage effectively by acknowledging the common concern: “We need to do something with AI, but we’re not quite sure where to start” – and promised no vendor pitches, just honest guidance from practitioners who’ve seen both successes and failures.

Lance Rubin (CEO & Founder, Model Citizn)

Main Focus: Business case, financial modelling, and the human/organisational impact of AI adoption

Key Points:

Recommended TAB AI as a better tool for financial work, sharing a success story of completing an 8-entity audit reconciliation in 3.5 hours (versus 2-3 days manually)

Opened with the stark reality that 95% of AI projects fail – not due to technology itself, but because clarity and foundations are missing

Defined Agentic AI as a specific subset of AI that operates independently with human-like goals and actions, but warned that it can appear more valuable than it actually is

Emphasised the critical need for structured models and clean data to provide clarity and confidence, comparing poor AI implementation to giving dangerous tools to someone who doesn’t know how to use them

Highlighted major watch-outs: misalignment between AI output and human intent, AI “faking” confidence, generating data to fill gaps (hallucinations), and lacking real-world context

Shared practical testing results: threw a SaaS financial model at five different LLMs, with the highest completion rate being only 29% (Excel agent mode)

Warned about three critical risk areas: business alignment failures, cost implications (including hidden costs like losing valuable client relationships), and the human/cultural resistance factor

Stressed that organisations must provide clarity, transparency, and reskilling for employees, not just implement technology

Advocated for starting with business case modelling before any AI implementation: understand costs, ROI, and align AI initiatives to measurable business goals
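The business-case step can begin as simple arithmetic before any tool is chosen. Below is a minimal sketch in Python, not the panel's own model. The 3.5 hours comes from the reconciliation example above; the 20 manual hours assume 2.5 eight-hour days, and the hourly cost, tool cost, setup cost, and run frequency are all hypothetical placeholders to replace with your own figures.

```python
from dataclasses import dataclass


@dataclass
class BusinessCase:
    """Hypothetical inputs for one narrow AI use case."""
    manual_hours_per_run: float   # time the task takes today
    ai_hours_per_run: float       # time with AI, including human review
    runs_per_year: int
    hourly_cost: float            # loaded cost of the person doing the work
    annual_tool_cost: float       # licences, hosting, monitoring
    one_off_setup_cost: float     # data clean-up, training, integration

    def annual_saving(self) -> float:
        hours_saved = (self.manual_hours_per_run - self.ai_hours_per_run) * self.runs_per_year
        return hours_saved * self.hourly_cost

    def first_year_roi(self) -> float:
        total_cost = self.annual_tool_cost + self.one_off_setup_cost
        return (self.annual_saving() - total_cost) / total_cost

    def payback_months(self) -> float:
        net_monthly = (self.annual_saving() - self.annual_tool_cost) / 12
        if net_monthly <= 0:
            return float("inf")   # the setup cost is never recovered
        return self.one_off_setup_cost / net_monthly


case = BusinessCase(
    manual_hours_per_run=20.0,    # assumed: 2.5 days at 8 hours per day
    ai_hours_per_run=3.5,         # figure quoted in the session
    runs_per_year=12,             # hypothetical: monthly reconciliation
    hourly_cost=100.0,            # hypothetical
    annual_tool_cost=3_000.0,     # hypothetical
    one_off_setup_cost=8_400.0,   # hypothetical
)

print(f"Annual saving:  {case.annual_saving():,.0f}")
print(f"First-year ROI: {case.first_year_roi():.0%}")
print(f"Payback period: {case.payback_months():.1f} months")
```

The sketch counts only time saved. Hidden costs the panel mentioned, such as losing a valuable client relationship, would need their own line items.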

Danusha Muthukumarana (Potenza – Data Architecture/Engineering)

Main Focus: Data infrastructure, the “golden layer” architecture, and responsible AI scaling

Key Points:

Connected hallucinations and poor predictions directly back to data quality issues at the foundation level

Introduced the three-tier data architecture pyramid:

- Bronze layer: Raw, messy data straight from the source

- Silver layer: Partially cleaned, semi-usable transitional data

- Gold layer: Harmonised, validated, business-ready data – “where truth lives”
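To make the three tiers concrete, here is a minimal sketch in plain Python. It is an illustration under assumptions, not Potenza's implementation: the invoice records, field names, and business rules are all hypothetical, and a real pipeline would usually run on a data platform rather than in-memory lists.

```python
from datetime import date

# Bronze: raw rows exactly as they arrived (hypothetical invoice export).
bronze = [
    {"invoice_id": "INV-001", "entity": " acme pty ltd ", "amount": "1,200.50", "date": "2024-03-01"},
    {"invoice_id": "INV-001", "entity": "ACME PTY LTD", "amount": "1200.50", "date": "2024-03-01"},  # duplicate
    {"invoice_id": "INV-002", "entity": "Beta Co", "amount": "n/a", "date": "2024-03-02"},  # unparseable amount
    {"invoice_id": "INV-003", "entity": "beta co", "amount": "300", "date": "2024-03-05"},
]


def to_silver(rows):
    """Parse types and normalise text; set aside rows that cannot be parsed."""
    clean, rejected = [], []
    for row in rows:
        try:
            clean.append({
                "invoice_id": row["invoice_id"].strip(),
                "entity": row["entity"].strip().upper(),
                "amount": float(row["amount"].replace(",", "")),
                "date": date.fromisoformat(row["date"]),
            })
        except (ValueError, KeyError):
            rejected.append(row)  # kept for investigation, never silently dropped
    return clean, rejected


def to_gold(rows):
    """Deduplicate on invoice_id and keep only rows that pass business rules."""
    seen, gold = set(), []
    for row in rows:
        if row["invoice_id"] in seen or row["amount"] <= 0:
            continue
        seen.add(row["invoice_id"])
        gold.append(row)
    return gold


silver, rejected = to_silver(bronze)
gold = to_gold(silver)

# Business-ready view built only from the gold layer.
total_by_entity = {}
for row in gold:
    total_by_entity[row["entity"]] = total_by_entity.get(row["entity"], 0.0) + row["amount"]

print(total_by_entity)
print(f"{len(rejected)} row(s) rejected at the silver step")
```

An AI model pointed at the bronze list would see a duplicate invoice and an unparseable amount; pointed at the gold list, it sees one validated row per invoice.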

Emphasised that every failed AI project they’ve reviewed, including Azure Copilot deployments, was missing the golden layer

Explained the critical importance of sandboxing – creating safe playgrounds for AI models to test and learn without touching production systems or breaching compliance

Outlined three layers of sandbox control: architecture guardrails (containment, throttling, environment separation), continuous monitoring (detecting drift/bias early), and human validation checkpoints

Presented the three-step AI readiness framework:

- Assess your maturity: Identify current gaps in data quality, accessibility, and governance

- Align to business goals: Every AI initiative must tie to revenue, efficiency, or risk reduction – otherwise it’s just noise

- Build the golden layer: Establish a single source of truth, then roll out in phases (augmented → supervised → autonomous)

Stressed moving deliberately through phases rather than jumping straight to agentic AI
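The phased rollout and the human validation checkpoint can be expressed as a small gate in code. The sketch below is one possible reading of the augmented → supervised → autonomous progression. The function names, the 200-review minimum, and the 98% accuracy threshold are hypothetical choices for illustration, not figures from the webinar.

```python
from enum import Enum


class Phase(Enum):
    AUGMENTED = 1    # AI suggests, a human does the work
    SUPERVISED = 2   # AI acts, but only after a human approves each action
    AUTONOMOUS = 3   # AI acts alone, inside guardrails


def may_execute(phase: Phase, human_approved: bool, within_guardrails: bool) -> bool:
    """Return True if the AI's proposed action may run in the current phase."""
    if not within_guardrails:
        return False              # architecture guardrails always win
    if phase is Phase.AUGMENTED:
        return False              # suggestions only, nothing executes
    if phase is Phase.SUPERVISED:
        return human_approved     # human validation checkpoint
    return True                   # AUTONOMOUS


def next_phase(phase: Phase, reviewed: int, correct: int,
               min_reviewed: int = 200, min_accuracy: float = 0.98) -> Phase:
    """Promote one phase at a time, and only on enough reviewed evidence."""
    if phase is Phase.AUTONOMOUS or reviewed < min_reviewed:
        return phase
    if correct / reviewed < min_accuracy:
        return phase
    return Phase(phase.value + 1)


# A supervised action without sign-off is blocked.
print(may_execute(Phase.SUPERVISED, human_approved=False, within_guardrails=True))

# 248 of 250 expert-reviewed outputs were correct, so promotion is allowed.
print(next_phase(Phase.AUGMENTED, reviewed=250, correct=248))
```

Because `next_phase` only ever moves one step, there is no code path that jumps from augmented straight to autonomous.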

Mark Vigors (Potenza – Operations/Production Implementation)

Main Focus: Production realities, operational oversight, and practical implementation challenges

Key Points:

Raised an important future concern: insurance implications – how will insurers react when organisations make claims after over-relying on AI tools?

Opened with the critical insight: treat current AI like an inexperienced employee – very fast but requires constant checking because it will hallucinate when uncertain

Emphasised that current AI lacks comprehension – it can interpret data but fundamentally doesn’t understand it, which leads to misleading outputs

Outlined key hosting and infrastructure decisions: cloud vs. on-prem, data sovereignty concerns (is compute happening offshore?), and understanding implications for data breaches

Stressed the absolute necessity of continuous monitoring with subject matter expertise – gave example of AI producing seemingly cogent output that was actually fabricated when examined by an educated eye

Warned that larger organisations are using AI in advisory roles, not deterministic roles – the transition to giving AI more autonomous decision-making power is premature

Identified the biggest consequence: “When trust evaporates, any technology platform gets bypassed” – users won’t risk their credibility on unreliable outputs

Recommended narrow use case selection as the path to success: pick something understandable, definable, with outputs that can be well-monitored

Advocated for building systems with quick rollback capability – if something goes wrong in production, you need to extract it fast to maintain organisational trust

Shared a cautionary tale: witnessed AI analysing property data and producing valuations that were nonsensical but appeared reasonable enough to mislead non-experts

Referenced the disbarred US lawyer who used an LLM to write a legal brief with fabricated citations as an example of catastrophic professional consequences
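Quick rollback and expert monitoring can be combined in a simple switch that routes work back to the existing manual process once reviewers start rejecting AI output. This is a minimal sketch under assumptions: the class name, the review window, and the rejection threshold are hypothetical, and a production system would persist the switch state and alert someone when it trips.

```python
from collections import deque


class AIFeatureSwitch:
    """Route work to an AI step only while it is enabled and trusted."""

    def __init__(self, window: int = 20, max_reject_rate: float = 0.10):
        self.enabled = True
        self.max_reject_rate = max_reject_rate
        self._reviews = deque(maxlen=window)  # True = expert rejected the output

    def record_review(self, rejected: bool) -> None:
        """Log a subject-matter expert's verdict and roll back if trust drops."""
        self._reviews.append(rejected)
        window_full = len(self._reviews) == self._reviews.maxlen
        if window_full and sum(self._reviews) / len(self._reviews) > self.max_reject_rate:
            self.rollback()

    def rollback(self) -> None:
        """Take the AI step out of production; the manual path stays available."""
        self.enabled = False

    def run(self, task, ai_step, manual_step):
        """Use the AI step when enabled, otherwise the existing manual process."""
        return ai_step(task) if self.enabled else manual_step(task)


switch = AIFeatureSwitch(window=5, max_reject_rate=0.2)
for verdict in [False, False, True, False, True]:   # two of five outputs rejected
    switch.record_review(verdict)

result = switch.run(
    "valuation-123",
    ai_step=lambda task: f"ai:{task}",
    manual_step=lambda task: f"manual:{task}",
)
print(switch.enabled, result)
```

Keeping the manual path callable at all times is what makes the rollback fast: disabling the AI step is a flag change, not a redeployment.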

Panel Q&A on what to do to reduce the 95% failure rate on Agentic AI projects

The panel answered audience questions on AI modelling tools, risks in finance, and real-world failures. They emphasised human validation, data governance, and not outsourcing responsibility to AI, and addressed misconceptions about AI’s accuracy and readiness.

Closing Remarks

Panellists share key takeaways:

Lance: Get the basics right—focus on data and process fundamentals.

Danusha: Contain and structure data, build the golden layer.

Mark: Start small, drive productivity, don’t overtrust AI.

The session closed with encouragement to follow up for more resources and to focus on data fundamentals.

For more insights, resources, and future events, follow Potenza and Model Citizn on social media!

Websites: Model Citizn Website and Potenza Website

Want to take action? You can by clicking here

We have just launched our AI-Powered series of free fortnightly sessions to unpack all the hype and provide solid foundations for finance professionals. Join our Friday Future of Finance Lab by registering here.

Friday’s Future of Finance Lab

AI-Powered Accountant

Join our fortnightly community where finance professionals share insights, solve real challenges, and stay ahead of the AI revolution (max 100 attendees).

Key Benefits:

✅ Live Problem Solving – Bring work challenges

✅ Expert Insights – Practitioners not trainers

✅ AI Integration – Leverage AI in finance

✅ Networking – like-minded professionals

✅ Zero Cost – Completely free, always

What You’ll Get:

Fortnightly Focus Areas:

✅ – Financial Modelling Deep Dives

✅ – Data Analysis & Power BI Techniques

✅ – AI Applications in Finance

✅ – Open Forum & Case Studies

Exclusive Resources:

✅ – Meeting recordings and AI-generated summaries

✅ – Templates and tools shared during sessions

✅ – Resource library access

Who Should Attend:

✅ – Finance professionals wanting to upskill

✅ – Data analysts working with financial data

✅ – Anyone curious about AI in finance

✅ – Business leaders seeking data-driven insights

Buckle up. We look forward to seeing you there!

#AI #Accounting #Finance #CFO #FinancialModeling #AIAdoption #FutureOfWork

You can also browse our shop with pre-built models that work better than AI!